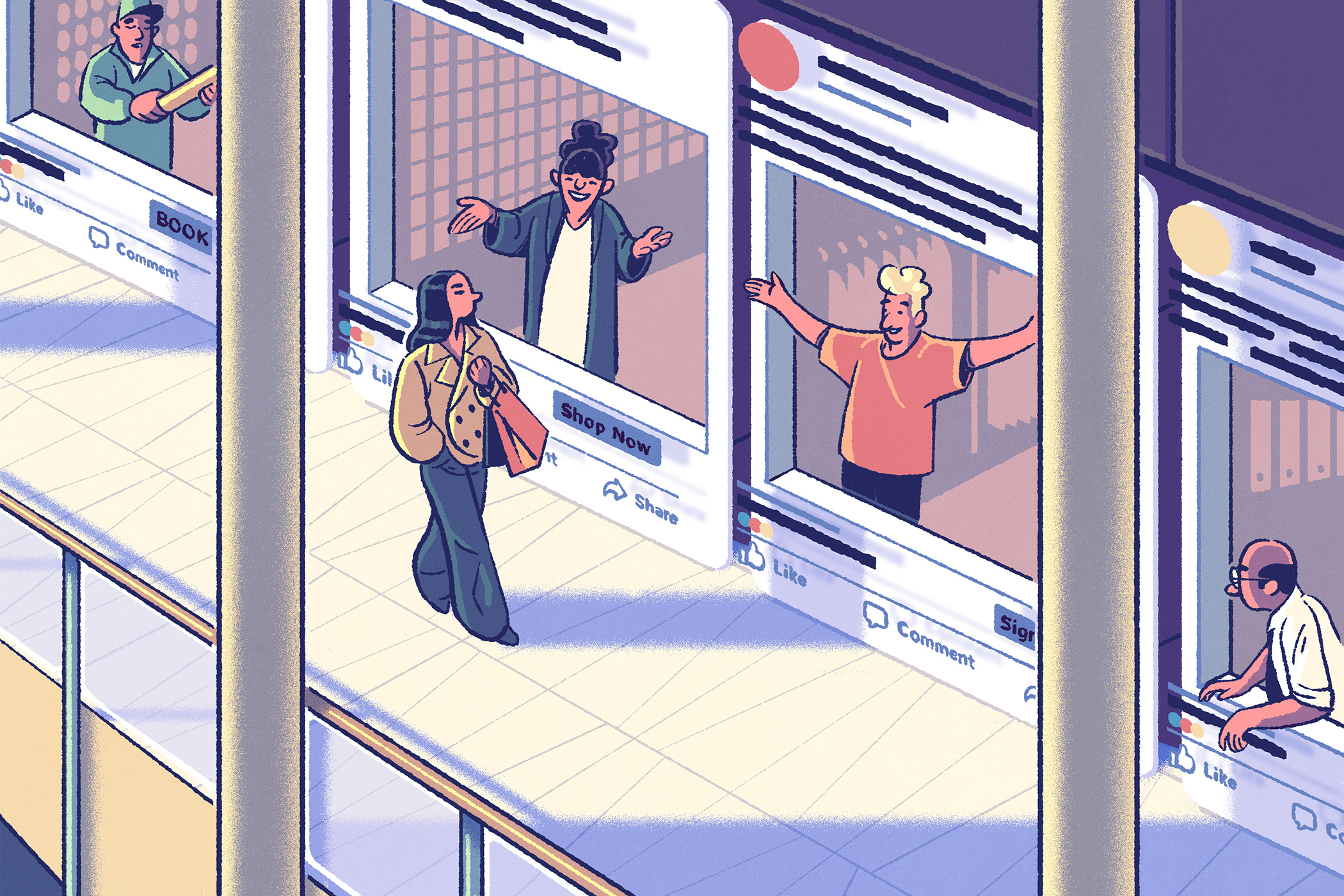

The ubiquity of social-media apps makes them fertile ground for researching the dynamics of modern life. Kellogg faculty explored the good and the bad of social media—from misinformation and prejudice to business models and influencer marketing—and how those features both reflect and shape the offline world.

In the weeks leading up to an election, it often feels like we can’t escape political news. But Kellogg’s Guy Aridor and colleagues found that most people’s smartphones are politics-free—even during a U.S. presidential race.

The researchers used an app that tracks how often certain keywords appear across people’s smartphone apps, including email, messaging, social media, news, music and video streaming, and web browsers. From a list of over 500 election-related terms and politicians’ names, they found that the median individual encountered only 13 of the keywords on a typical day in fall 2024.

That’s fewer than half of the terms a person would see in reading just a single news article about the election. And only 5 percent of the people in the study were exposed to political terms from news apps or websites.

“Apart from the very politically engaged, people don’t open the news at all,” Aridor says. “They’re getting election exposures sporadically throughout the day. So, the low amount of engagement that we find is not from intense bouts of political news consumption.”

The researchers further found that this low exposure wasn’t due to social-media platforms algorithmically suppressing political content. Instead, it’s largely driven by users’ personal preferences on which content they choose to view—which is rarely politics.

“Everyone’s in kind of their own little world about the types of content that they see,” Aridor says.

Misinformation can spread like wildfire on social media, despite efforts by platforms and regulators to moderate and label inaccurate content. Research by Kellogg’s William Brady and colleagues found one reason misinformation is so flammable online: outrage.

Across ten studies analyzing more than a million social-media posts, the researchers found that misinformation was more likely to trigger outrage than trustworthy news.

Outrage, in turn, drives people to share these often-misleading social-media posts. And it makes them more willing to do so without actually reading the article linked in the post, even if they are otherwise good at identifying misinformation.

“We actually find people are not terrible at discerning between misinformation versus trustworthy news,” Brady says. “But here’s the key: if you give them an outrage-evoking article with misinformation, that ability they have, it goes out the window; they are more likely to share it anyway.”

Even people who disagree with the misinformation they see on social media might unintentionally be helping to promote the content simply by interacting with it, Brady notes.

“The misinformation ecosystem is not just driven by user behavior; it’s also driven by algorithms,” he says. “When you engage with misinformation—even in disagreement—you’re actually contributing to the increase of misinformation in the ecosystem because you’re letting the algorithm know that it’s drawing engagement.”

Because of their skill in attracting engagement, influencers are a big deal on social networks and in the world of marketing. But while the common perception of influencers is of people ostentatiously displaying their expensive purchases and carefree lifestyle, research suggests that this behavior can backfire.

A study by Kellogg professor Maferima Touré-Tillery and alumna Jessica Gamlin found that people are less likely to connect with content creators who post about self-indulgent behavior, such as eating junk food or binge-watching TV.

The researchers labeled this phenomenon the “bad-influencer effect.”

Influencers who used hashtags such as #selfcontrol and #willpower had, on average, a significantly higher number of followers than those who used hashtags like #indulge and #indulgence. And users were less likely to follow accounts that posted about binge-watching TV shows before bed or accounts that used curse words.

“If I have a goal to be productive today, and I scroll past someone who’s talking about mindlessly watching Netflix, I’m probably not going to connect with them,” says Gamlin. “Instead, I’ll avoid socially connecting with them to try to get back on track with my goal to be productive.”

Throughout their studies, the researchers found evidence of a strong association between a participant’s commitment to a personal goal and their willingness to connect with a content creator.

“Naturally, people seem to be more open to the advice and recommendations of those with whom they feel connected, whether this feeling of connection is mutual or only one-sided,” Touré-Tillery says.

The “follow-back”—where a user follows an account that recently followed them—is common practice on social media, allowing people to make new connections and expand their network. And while it’s often the result of a split-second decision, it can still reveal unconscious biases.

That’s what Kellogg’s Maryam Kouchaki found when she joined a team of researchers examining how race and politics affect the likelihood of a follow-back. The team created 18 X profiles, split evenly between Black and white identities and liberal, conservative, or neutral political beliefs. Over two weeks, they used those accounts to follow 6,000 accounts on X.

When they looked at the frequency of follow-backs, they found that race played a role. People were 24 percent less likely to follow back accounts presented as belonging to Black people than accounts presented as belonging to white people, and the pattern held true whether the people making the decision were conservative or liberal.

“Even with extremely liberal users, you still find the racial bias,” Kouchaki says.

The researchers suggest that this result is an example of automatic thought processes that emerge in situations where the motive to appear unprejudiced is low. People, regardless of their political leaning, may be more prone to bias when making quick decisions of this kind.

“While prior research shows that liberals are more likely to self-report being less racist and care about these issues, this study shows that in making a quick, intuitive decision where they don’t know that they are being watched, that is not true,” Kouchaki says.

Many of the largest social-media platforms built their user base by offering their product for free. But growing concern among users and regulators over data privacy has led some platforms to shift to a subscription model where less user data is collected or sold, or to a hybrid of paid and free options.

Kellogg’s Sarit Markovich and a colleague built a model to weigh these options and determine when each business model makes the most sense.

They found that when the data a platform collects from its users is highly valuable and network effects are central to the platform’s features, offering an app for free makes sense. But if either of these factors is weak, a subscription model is the smarter play.

“[Companies] should always be thinking about the commercial value of their data and the strength of their network effects,” Markovich says. “If these change, their strategy should change as well.”

The model also offers insight on how policymakers can best regulate platforms and protect consumers. Discouraging hybrid “pay for privacy” models—as some European agencies have attempted—would likely reduce competition, drive up prices, and incentivize more-aggressive data collection.

“Regulators should recognize that there isn’t a one-size-fits-all solution,” Markovich says. “Protecting privacy does not always require ‘big gun’ tools, like banning entire business models. In many cases, simpler measures like price caps can better protect privacy. By limiting subscription prices, you can make choosing privacy more affordable and accessible for users.”